This is the introductory post for my new project, AutoDrone (the name is hopefully still a WIP).

I have never been the person who is bursting with ideas for side projects. I like useless, funny projects made by others, but I can never bring myself to make one. I need a purpose, a reason or just a goal, and a lot of problems along the way.

I find autonomous systems really interesting, especially UAVs/drones, which is why I am thinking about working in the defense sector.

DISCLAIMER: This won’t be a moral discussion about developing killing machines for private companies. Everyone has to decide for themselves if they are fine with it.

Back to the UAVs. After I talked with my friends and family about it, my brother asked me how I would know that I would like to work on these things, and why I don’t just buy or build a drone myself. I don’t know about you, but that seems like a really reasonable idea, and I have no idea why I didn’t do that in the first place.

Since I don’t know much about UAVs, I decided to consult our new best friends, the LLMs built by OpenAI and Google, about how to actually build a UAV. As it turns out, the “movement stack” that is the most accessible and apparently also used in production is mostly made by the Dronecode Foundation.

The main parts are:

- “PX4 Autopilot” as the flight control software, which is the thing that sends the right signals to the motors when you say you want to fly forward.

- MAVLink, which is the protocol used to send the command “fly forward” to the flight controller.

- QGroundControl, which is, as the name says, ground control software where you can plan missions, control your drone and much more.

Not built by the Dronecode Foundation but also important are:

- ROS2 (Robot Operating System 2) for a lot of the heavy lifting, like sensor integration, connecting different systems, mapping, etc. I was told by an LLM that companies try to remove ROS2 from critical systems since it has too many dependencies and is a huge dependency itself.

- Gazebo/Isaac Sim for simulations. I will use Gazebo since I don’t have a 1k NVIDIA GPU.

- Ultralytics YOLO computer vision models for object detection.

The Plan

Build an autonomous drone that can detect a person and follow them, without trying to recreate one-time-use drones.

I will use the software parts mentioned above. Later I will try to strip away ROS2 as much as possible, but first it should work using ROS2.

The Hardware

Current Hardware:

- Huawei laptop with an AMD Ryzen 7 5800H and integrated graphics

- ThinkPad X1 Carbon Gen 6, Intel Core i5-8350U

Obviously this is not high-end hardware, but it should suffice for a start.

Hardware to be bought:

- Holybro X500 V2 Developer Kit: This is a drone kit with a PX4 flight controller that is well supported and easy to assemble. Keep in mind that my goal is not to build the actual drone but the software stack for it.

- NVIDIA Jetson Orin Nano Super 8GB as an onboard computer. This will handle object detection and SLAM.

- Intel RealSense Depth Camera D455

This comes to around 1,700 €.

Feasibility

We all know that it is feasible; just take a look at companies like Helsing, Stark or Anduril. But before I buy anything, I wanted to know what the actual scope of this is. So I set out on a reconnaissance mission with Opencode and ChatGPT-5.4.

Sadly, ROS2 only has first-class support on Ubuntu 24.04 and, for some odd reason, Windows 10???? But I am in luck, because I run NixOS and way smarter people than me have built a ROS2 overlay for Nix.

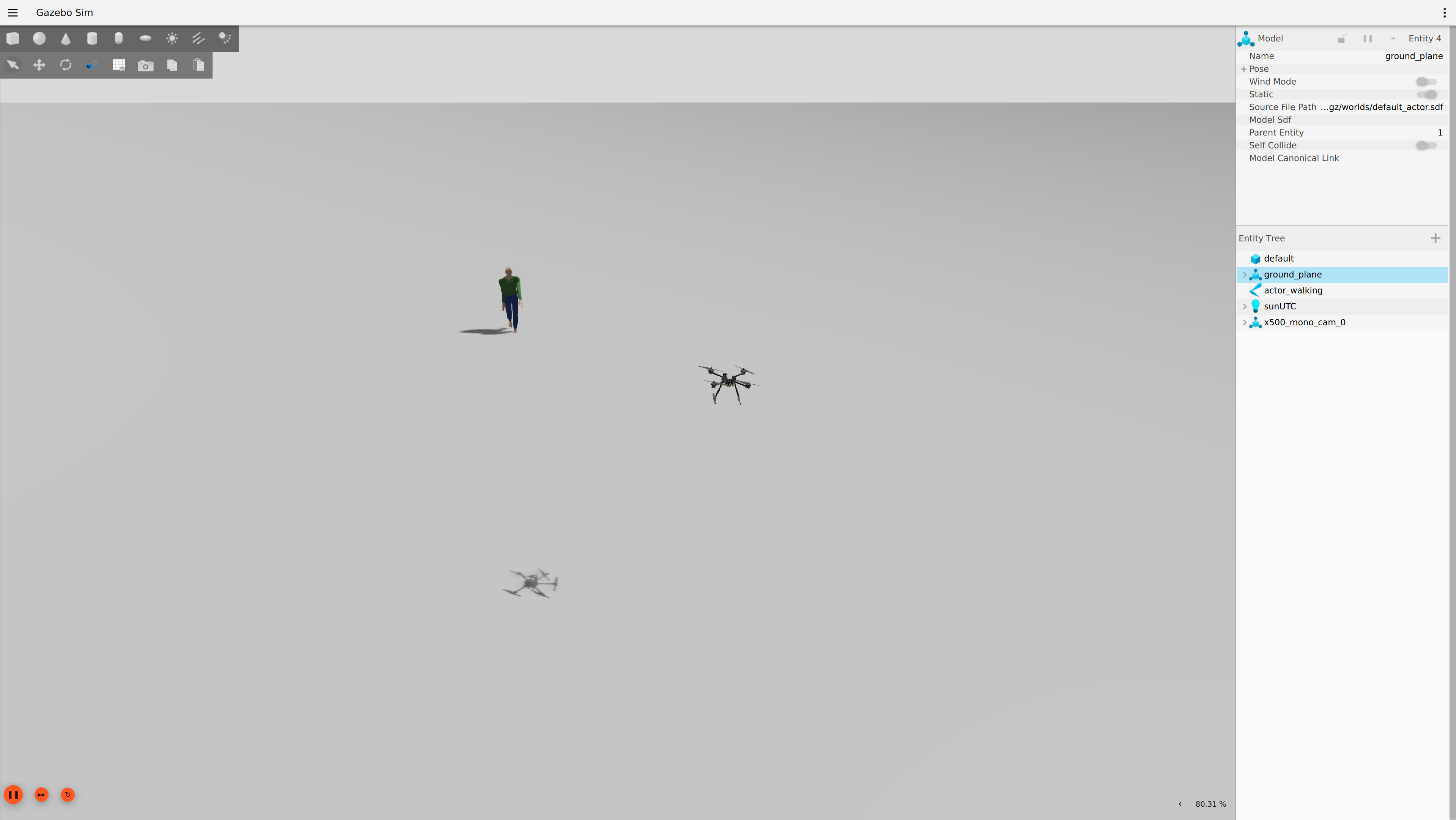

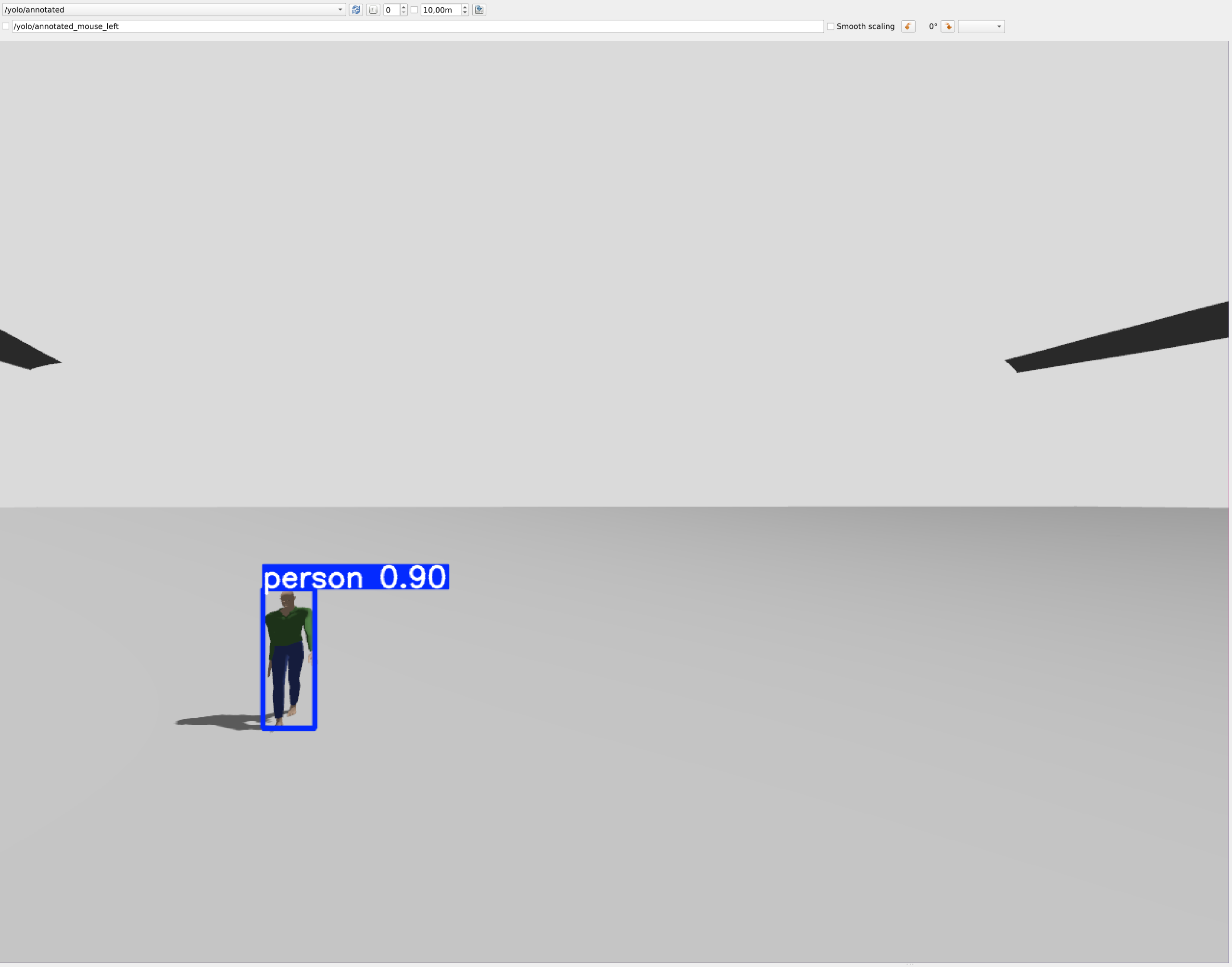

Using this overlay we - the LLMs and myself - were able to build a functioning prototype. A simulated drone in Gazebo which has a camera and streams the images to a ROS2 topic. Then the YOLO26 model runs object detection and publishes a topic with the annotated images.

Figure 1: Gazebo sim

Figure 2: rqt with YOLO26 annotated video feed

It is also kind of able to follow the person, but it is janky: the LLM just built it so that it tries to keep the rectangle from YOLO26 in the middle of the screen. I actually don’t know yet what the correct logic would be, but this solution definitely feels wrong.
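To make the “keep the rectangle in the middle of the screen” idea concrete, here is a minimal sketch of what such logic might look like as a plain proportional controller. This is not the code from the prototype: the `BoundingBox` type, the function names, the gains and the sign conventions (positive yaw rate turns right, positive climb rate goes up) are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class BoundingBox:
    """Detection box in pixel coordinates (hypothetical type for this sketch)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def clamp(value: float, limit: float) -> float:
    """Restrict value to the range [-limit, limit]."""
    return max(-limit, min(limit, value))


def centering_command(box: BoundingBox, image_width: int, image_height: int,
                      yaw_gain: float = 1.0, climb_gain: float = 0.5,
                      max_rate: float = 1.0) -> tuple[float, float]:
    """Return (yaw_rate, climb_rate) that nudges the box toward the image center."""
    box_cx = (box.x_min + box.x_max) / 2.0
    box_cy = (box.y_min + box.y_max) / 2.0

    # Normalized error in [-1, 1]; 0 means the box is centered on that axis.
    err_x = (box_cx - image_width / 2.0) / (image_width / 2.0)
    err_y = (box_cy - image_height / 2.0) / (image_height / 2.0)

    # Assumed convention: box right of center -> turn right (positive yaw rate).
    yaw_rate = clamp(yaw_gain * err_x, max_rate)
    # Image y grows downward, so a box above center means "climb" (positive).
    climb_rate = clamp(-climb_gain * err_y, max_rate)
    return yaw_rate, climb_rate


if __name__ == "__main__":
    person = BoundingBox(480, 200, 640, 280)  # person near the right edge
    print(centering_command(person, 640, 480))
```

Note that a controller like this only reacts to where the box sits in the image. It has no notion of how far away the person is, which may be one reason the approach feels incomplete.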

Where do we go from here?

Since I now have a mental model of the system I want to build, I can see the problems with the vibecoded one. Link to the repository.

I have now started a new repo where I am building everything with as little help as possible from the LLMs. I still ask them questions and let them explain stuff to me, but I won’t let them write any code, and I won’t run to them as soon as something doesn’t work. Why and how specifically is material for another post. Link to the new repository.

Since this is a big project, I cannot work on all the different domains at the same time. What I will do next:

- Get to know the YOLO models and their pre- and postprocessing.

- Integrate them into a C++ ROS2 node and publish the annotated image.
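As a rough idea of what “pre- and postprocessing” can involve for a detector like this, here is a small dependency-free sketch. It assumes a square 640-pixel model input with aspect-preserving “letterbox” resizing, boxes given as `(x1, y1, x2, y2)`, and greedy non-maximum suppression (NMS) as a postprocessing step. All function names and thresholds are illustrative, and the exact steps depend on the model version and export format; some models already include NMS or do not need it at all.

```python
def letterbox_params(src_w: int, src_h: int, dst: int = 640):
    """Scale and padding needed to fit a src_w x src_h image into a dst x dst square."""
    scale = min(dst / src_w, dst / src_h)
    new_w, new_h = round(src_w * scale), round(src_h * scale)
    pad_x = (dst - new_w) / 2.0  # horizontal padding on each side
    pad_y = (dst - new_h) / 2.0  # vertical padding on each side
    return scale, pad_x, pad_y


def to_source_coords(box, scale, pad_x, pad_y):
    """Map a box from model-input pixels back to original image pixels."""
    x1, y1, x2, y2 = box
    return ((x1 - pad_x) / scale, (y1 - pad_y) / scale,
            (x2 - pad_x) / scale, (y2 - pad_y) / scale)


def iou(a, b) -> float:
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(detections, conf_threshold: float = 0.25, iou_threshold: float = 0.45):
    """Greedy NMS over a list of (box, score) pairs; returns the kept pairs."""
    candidates = sorted((d for d in detections if d[1] >= conf_threshold),
                        key=lambda d: d[1], reverse=True)
    kept = []
    for box, score in candidates:
        # Keep a box only if it does not overlap too much with a better one.
        if all(iou(box, kept_box) <= iou_threshold for kept_box, _ in kept):
            kept.append((box, score))
    return kept


if __name__ == "__main__":
    scale, pad_x, pad_y = letterbox_params(1280, 720)
    raw = [((320, 320, 480, 400), 0.9), ((322, 322, 482, 402), 0.8)]
    for box, score in nms(raw):
        print(to_source_coords(box, scale, pad_x, pad_y), score)
```

The same geometry (scale, padding, mapping back) carries over to a C++ node; only the container types and the image library calls change.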

After that, I’ll either look into the actual drone states, state changes and behavior trees, or I’ll buy the RealSense depth camera and try to understand SLAM and all the stuff I don’t even know exists yet.